The AI Convergence: Why Every Coding Tool Lands on the Same Two Defaults

Open your AI coding tool. Type "build me a landing page with a contact form." Don't specify a language. Don't specify a framework.

What you get back depends entirely on where you asked.

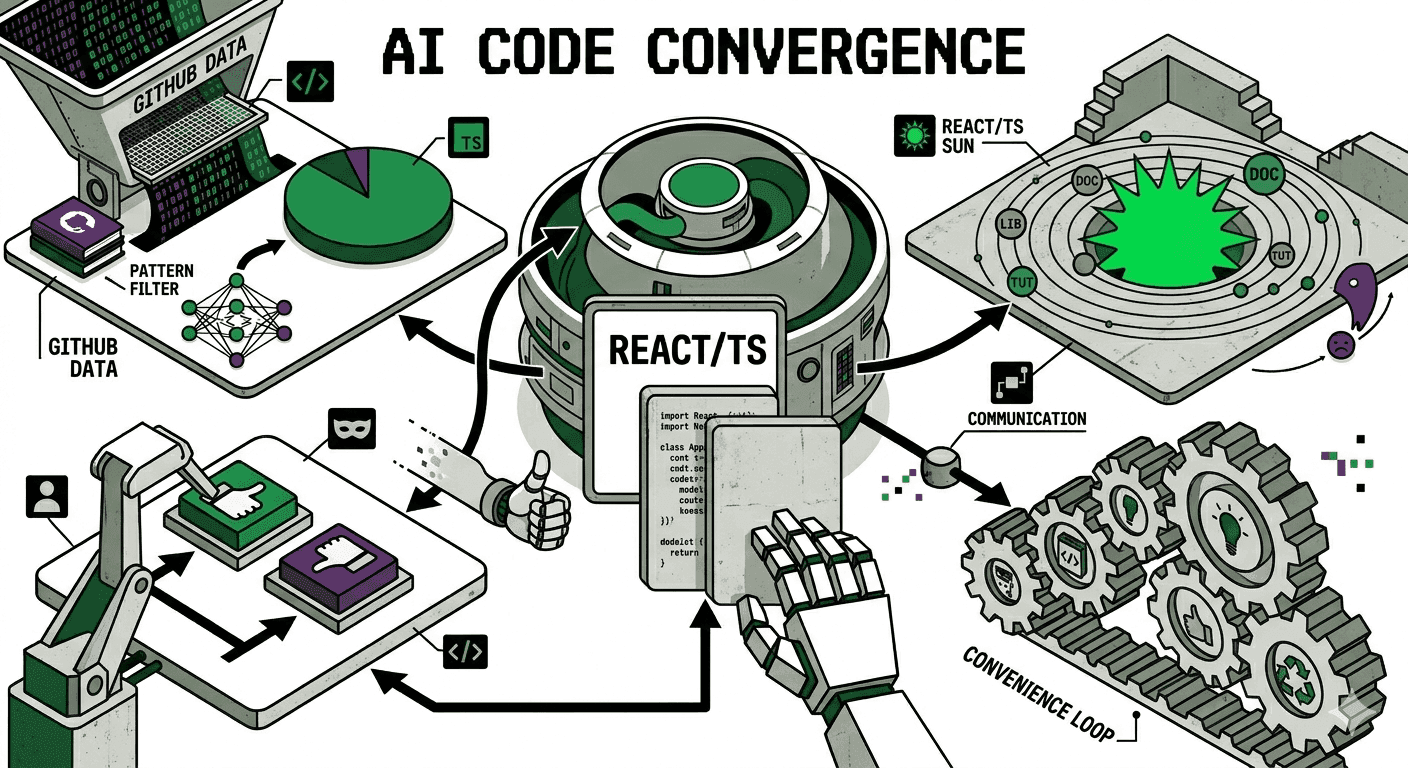

Two defaults. Same model underneath. Completely different outputs. This isn't random -- it's the output of a deeply mechanical set of forces driven by runtime constraints, compilation pipelines, training dynamics, and something GitHub recently called a "convenience loop."

The question isn't just "why TypeScript?" It's "why does the tool's delivery mechanism dictate the entire technology stack?"

The Training Data Problem: LLMs Are Regurgitation Machines with Extrapolation

To understand why AI tools default to TypeScript, you first need to understand how LLMs learn to write code. They don't reason about programming languages the way a human compiler engineer would. They learn statistical patterns from massive corpora of public code. The better a language is represented in that corpus, the better the model's output quality, pattern matching, and error recovery will be.

"AI's ability to write code in a language is proportional to how much of that language it's seen. They're big regurgitators, with some extrapolation." That is how Anders Hejlsberg described it in a GitHub Blog interview.

That's not an insult -- it's an architectural description. And what has every major LLM seen most of?

JavaScript and Python, historically. But in August 2025, TypeScript overtook both to become the most-used language on GitHub.

The corpus shifted. And the models follow the corpus.

When an AI tool doesn't know what language you want, it defaults to the one where it has the highest confidence, the richest pattern library, and the lowest probability of generating broken output. For web-adjacent tasks right now, that's TypeScript.

The Type System as a Structured Prompt

Here's the part most people miss.

TypeScript doesn't just benefit LLMs through training data volume. It benefits them through structural information density. When a model reads TypeScript code, every function signature, every interface definition, every generic constraint is a piece of machine-readable contract.
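To make the contrast concrete, here is a minimal sketch of one function with and without types. Only userId and options come from this article's example; getUserOrders, UserId, OrderQueryOptions, Order, toUserId, and the sample data are hypothetical names invented for illustration.

```typescript
// Untyped version, shown as a comment for contrast: nothing here says what
// userId is, what options may contain, or what comes back.
//   function getUserOrders(userId, options) { ... }

// Branded type: still a string at runtime, but a raw string is not assignable to it.
type UserId = string & { readonly __brand: "UserId" };

type OrderStatus = "pending" | "shipped" | "delivered";

interface OrderQueryOptions {
  limit?: number;
  status?: OrderStatus;
}

interface Order {
  id: string;
  userId: UserId;
  status: OrderStatus;
  totalCents: number;
}

// Hypothetical helper that mints a UserId from a raw string.
function toUserId(raw: string): UserId {
  return raw as UserId;
}

// Made-up in-memory data so the sketch runs on its own.
const ORDERS: Order[] = [
  { id: "o1", userId: toUserId("u1"), status: "shipped", totalCents: 1200 },
  { id: "o2", userId: toUserId("u1"), status: "pending", totalCents: 550 },
  { id: "o3", userId: toUserId("u2"), status: "shipped", totalCents: 9900 },
];

// The signature alone states the contract: a branded id in, an optional
// filter object in, an array of Order out.
function getUserOrders(userId: UserId, options: OrderQueryOptions = {}): Order[] {
  const { limit = Infinity, status } = options;
  return ORDERS
    .filter((o) => o.userId === userId)
    .filter((o) => status === undefined || o.status === status)
    .slice(0, limit);
}
```

Calling getUserOrders("u1") with a plain string literal is rejected by the compiler, which is the kind of constraint a model can read directly from the signature instead of inferring from naming.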

The TypeScript version tells the model: what kind of argument userId is (a branded type, probably not a raw string), what shape options expects, and what structure the return array contains. The model doesn't need to infer this from variable naming conventions or surrounding code. The type system is literally an in-line specification.

This maps directly to how transformers work. They predict the most probable next token given context. Richer semantic context means a higher probability of a correct prediction, which in turn means better code output. TypeScript's type system is, in a very real sense, structured context injection built into the language syntax itself.

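Static typing doesn't just catch errors -- for AI-generated code specifically, it catches most of the ones that stop a build. The ICSE 2025 study listed in the references found that 94% of compilation errors in LLM-generated code are type-check failures.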

The Compiler as a Free Feedback Loop

LLMs produce code in iterations. Even the best AI coding assistants operate in a guess-check-refine loop. The question is: what is the checking mechanism?

In a dynamically typed language, the feedback mechanism is runtime. You write the code, you run it, something explodes, you feed the error back to the model. That cycle might be 10-30 seconds per iteration, longer if the bug is a silent logic error that only surfaces in specific execution paths.

In TypeScript, the feedback mechanism is compile-time.

The TypeScript compiler acts as a constant, near-instant validator. Type errors surface before the code runs, they're localized to specific lines, and they carry enough semantic information for a model to self-correct in the same context window.

[Figure: feedback loop comparison. Panels: Dynamic (JS/Python) and Static (TypeScript).]

This matters because the tighter the feedback loop, the fewer iterations needed to converge on correct code. TypeScript's type system plus a modern test runner creates a dual feedback architecture: static typing catches structural mistakes in milliseconds, tests catch behavioral mistakes in seconds. Raw JavaScript has only the second.

From a systems perspective: TypeScript compresses the iteration cycle. For an AI coding tool trying to maximize output quality per token spent, that compression is extremely valuable.
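A minimal sketch of the difference, using a hypothetical User type and formatGreeting functions (none of these names come from a real codebase). The same mistake, a misspelled property name, is flagged before execution when types are present and only shows up at runtime when they are not.

```typescript
interface User {
  id: number;
  displayName: string;
}

// Typed path: the compiler checks every property access against User.
function formatGreeting(user: User): string {
  return `Hello, ${user.displayName.toUpperCase()}`;
}

// Untyped path: `any` switches checking off, which approximates plain JavaScript.
function formatGreetingUntyped(user: any): string {
  // Typo: "displayname" instead of "displayName". Nothing complains until this runs.
  return `Hello, ${user.displayname.toUpperCase()}`;
}

// Runs the untyped version and reports how the failure surfaces.
function tryUntyped(user: User): string {
  try {
    return formatGreetingUntyped(user);
  } catch (err) {
    return err instanceof TypeError ? "runtime TypeError" : "other error";
  }
}

const user: User = { id: 1, displayName: "Ada" };

// The same typo in typed code is reported by tsc at this exact line before
// anything executes. The directive below tells the compiler an error is
// expected here, so the file still builds for demonstration purposes.
// @ts-expect-error Property 'displayname' does not exist on type 'User'.
const typo: unknown = user.displayname;
```

In the untyped path the error message arrives only after execution and points at the crash site, not necessarily at the cause. In the typed path the diagnostic names the property and the line, which is the kind of localized signal a model can act on within a single iteration.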

Ecosystem Gravity and Framework Defaults

This is the more mundane but equally important force. TypeScript doesn't just win on theoretical merits -- it wins because the entire modern web framework ecosystem scaffolds in TypeScript by default.

Next.js, Astro, Angular, NestJS, Vite, the AWS CDK, Pulumi -- all TypeScript by default. When an AI tool is trained on recent GitHub code, it's overwhelmingly reading TypeScript project structures, TypeScript configuration files, TypeScript component patterns.

If you ask it to scaffold a new project without specifying a language, it's going to reproduce the most statistically common pattern it's seen. Which is, now, TypeScript.

This is a compounding effect. Frameworks default to TypeScript. Developers write TypeScript. More TypeScript on GitHub. Models train on TypeScript. Models default to TypeScript. Developers are more productive with AI in TypeScript. More TypeScript gets written. The loop feeds itself.

The Two Defaults: Runtime Decides Everything

Here's where it gets interesting. The forces above -- training data, type system density, compiler feedback, ecosystem gravity -- all explain why TypeScript dominates. But they don't explain why Claude Desktop gives you a single HTML file when V0 gives you a full React + TypeScript component for the exact same prompt.

The answer isn't the model. It's the runtime.

The App Builders: React + TypeScript by Default

Platforms like V0, Bolt, Lovable, and Google AI Studio aren't just chat interfaces -- they're full development environments with embedded build pipelines. When you type a prompt into Bolt, here's what's actually running behind the scenes:

1. Prompt Received: The model interprets your request in the context of a React + TypeScript project scaffold that already exists.

2. Code Generated as TSX: The LLM outputs TypeScript React components because the platform has pre-configured the project as a Vite/Next.js app with TypeScript strict mode.

3. Bundler Compiles in Real-Time: Vite (or a similar bundler) runs in the background. TypeScript is transpiled, JSX is transformed, imports are resolved -- all in milliseconds.

4. Hot Module Replacement: The preview iframe updates instantly. The user sees the result without refreshing, without a terminal, without installing anything.

5. Type Errors Feed Back to the Model: If tsc catches an error, the platform feeds it back to the LLM automatically. The model self-corrects before you ever see the bug.

The platform can afford React + TypeScript because it ships the entire compilation pipeline. You never run npm install. You never configure tsconfig.json. You never touch a terminal. The build step is invisible -- but it's there, and it's doing the heavy lifting that makes TypeScript viable.
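The generate-check-refine cycle in that pipeline can be sketched as a small loop. Everything here is hypothetical: generate stands in for the model call, check stands in for the type checker (tsc or similar), and none of the names correspond to a real platform's API.

```typescript
interface Diagnostic {
  line: number;
  message: string;
}

// Stand-in for an LLM call: receives the prompt plus any diagnostics from the
// previous attempt and returns new source text.
type Generate = (prompt: string, feedback: Diagnostic[]) => string;

// Stand-in for the type checker: returns an empty array when the code is clean.
type Check = (source: string) => Diagnostic[];

interface RefineResult {
  source: string;
  attempts: number;
  clean: boolean;
}

// Regenerates until the checker reports no diagnostics or attempts run out.
function refineUntilClean(
  prompt: string,
  generate: Generate,
  check: Check,
  maxAttempts = 3,
): RefineResult {
  let feedback: Diagnostic[] = [];
  let source = "";
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    source = generate(prompt, feedback);
    feedback = check(source);
    if (feedback.length === 0) {
      return { source, attempts: attempt, clean: true };
    }
  }
  return { source, attempts: maxAttempts, clean: false };
}

// Toy stand-ins so the loop can be run end to end: the fake model gets it
// wrong once, then corrects itself when it sees a diagnostic.
const fakeGenerate: Generate = (_prompt, feedback) =>
  feedback.length === 0 ? "const n: number = 'five';" : "const n: number = 5;";

const fakeCheck: Check = (source) =>
  source.includes("'five'")
    ? [{ line: 1, message: "Type 'string' is not assignable to type 'number'." }]
    : [];

const result = refineUntilClean("declare n", fakeGenerate, fakeCheck);
```

With these stand-ins the loop settles on the second attempt. The cap on attempts is a design choice in this sketch, not something the platforms are known to document; a real system would also need to decide what to show the user when the cap is hit.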

This is why these platforms default to React specifically. React's component model maps almost perfectly to how LLMs think about UI generation:

```tsx
// A component is a self-contained, composable unit
// The model can generate one component at a time
// Props are a typed contract between components
// The tree structure maps to the DOM hierarchy

// Assumed shape for FormField (illustrative; adjust to your own form schema)
interface FormField {
  name: string;
  label: string;
  type?: string;
}

interface ContactFormProps {
  onSubmit: (data: FormData) => Promise<void>;
  fields: FormField[];
  submitLabel?: string;
}

export function ContactForm({ onSubmit, fields, submitLabel = 'Send' }: ContactFormProps) {
  // Self-contained logic, typed inputs, predictable output
  // This is exactly the kind of structure an LLM excels at generating
  return (
    <form onSubmit={(e) => { e.preventDefault(); void onSubmit(new FormData(e.currentTarget)); }}>
      {fields.map((f) => (
        <input key={f.name} name={f.name} type={f.type ?? 'text'} placeholder={f.label} />
      ))}
      <button type="submit">{submitLabel}</button>
    </form>
  );
}
```

The component model is a natural fit for token-by-token generation. Each component is a bounded context with typed inputs and predictable outputs -- exactly what transformers are optimized to produce. React's composability means the model can build complex UIs piece by piece without losing coherence.

The Chat Interfaces: HTML + CSS Because There's No Build Step

Now contrast that with Claude Desktop, Claude Web, Gemini Chat, or ChatGPT. These tools have a fundamentally different constraint: the output has to work the moment the user copies it.

There's no Vite running in the background. There's no node_modules. There's no TypeScript compiler. The user is going to take that output and either:

- Save it as a .html file and double-click it

- Paste it into CodePen or JSFiddle

- Serve it with python -m http.server

In every case, the code must execute with zero compilation. That constraint eliminates TypeScript entirely -- browsers don't run .ts files. It eliminates JSX -- browsers don't parse <Component /> syntax. It eliminates npm imports -- there's no package resolution.

What's left? The web's native stack: HTML, CSS, and vanilla JavaScript.

The Decision Tree

This isn't a preference. It's a constraint-driven decision:

Notice the third branch: tools like Claude Code and Cursor sit in the middle. They have access to the user's local terminal and file system. They know if you have Node.js installed, if there's a package.json, if TypeScript is configured. So they default to TypeScript + whatever framework your project already uses -- because they can verify the build pipeline exists.
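As a rough sketch, the three branches described above can be written as a small function. The type and field names (DeliveryEnvironment, hasEmbeddedBuildPipeline, and so on) are hypothetical, and no tool is known to implement its default this literally; the point is only that the inputs are properties of the runtime, not of the model.

```typescript
// What the tool can rely on at delivery time. Field names are hypothetical.
interface DeliveryEnvironment {
  hasEmbeddedBuildPipeline: boolean; // app builder with a bundler and live preview
  hasLocalToolchain: boolean;        // editor or CLI agent with terminal and file access
  existingFramework?: string;        // detected from the user's project, if any
}

type DefaultStack =
  | { kind: "react-typescript" }
  | { kind: "project-typescript"; framework: string }
  | { kind: "html-css-vanilla-js" };

function chooseDefaultStack(env: DeliveryEnvironment): DefaultStack {
  // App builders: the platform ships the compiler, so TSX is safe to emit.
  if (env.hasEmbeddedBuildPipeline) {
    return { kind: "react-typescript" };
  }
  // Editors and CLI agents: follow whatever the local project already uses.
  if (env.hasLocalToolchain) {
    return { kind: "project-typescript", framework: env.existingFramework ?? "unspecified" };
  }
  // Chat interfaces: nothing compiles, so output must run in a browser as-is.
  return { kind: "html-css-vanilla-js" };
}
```

For example, chooseDefaultStack({ hasEmbeddedBuildPipeline: false, hasLocalToolchain: false }) falls through to the zero-build branch, which mirrors the chat-interface case.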

The model's "preference" for TypeScript hasn't changed across any of these. What changes is the delivery constraint. A React component with TypeScript is, by most engineering measures, better output -- more maintainable, more composable, more type-safe. But "better" doesn't matter if the user can't run it. And a single HTML file that opens in any browser, on any machine, with zero setup? That's a different kind of "better."

This is the same trade-off that's existed in web development for decades: the compiled, toolchain-heavy approach vs. the zero-dependency, view-source approach. AI tools didn't invent this tension -- they just made it visible by forcing the decision on every single prompt.

The Convenience Loop: How AI is Killing Language Innovation

This brings us to the most uncomfortable implication of all of this.

GitHub's Octoverse 2025 report identified a pattern they called the "convenience loop." It works like this:

1. AI Tools Work Better: Models produce higher-quality output in languages with more training data. TypeScript, Python, Go, and Rust dominate.

2. Developers Notice: Productivity spikes when the AI assistant actually works. Autocomplete hits, generated code compiles, suggestions are contextually accurate.

3. Developers Choose Those Languages: Given the choice between a language where AI helps and one where it doesn't, developers pick the one that makes them faster.

4. More Code Gets Written: The chosen languages accumulate more repositories, more examples, more patterns on GitHub.

5. Models Train on More Data: The next training run ingests the new corpus. The dominant languages get even more representation.

6. AI Tools Get Even Better: More training data means better pattern matching, fewer hallucinations, higher confidence scores. Go back to step 1.
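The self-reinforcing shape of this loop can be illustrated with a toy model. This is not from the Octoverse report: the starting shares, the bias exponent, and the update rule are all invented for illustration, and the only assumption encoded is that AI assistance helps disproportionately more where the corpus is larger (bias greater than 1).

```typescript
// Share of newly written code per language. Values must be positive and sum to 1.
type Shares = Record<string, number>;

// One turn of the loop: each language's share of new code is weighted by
// share^bias and then renormalised so the shares sum to 1 again.
function nextRound(shares: Shares, bias: number): Shares {
  const weighted: Shares = {};
  let total = 0;
  for (const lang of Object.keys(shares)) {
    const w = Math.pow(shares[lang] ?? 0, bias);
    weighted[lang] = w;
    total += w;
  }
  const next: Shares = {};
  for (const lang of Object.keys(weighted)) {
    next[lang] = (weighted[lang] ?? 0) / total;
  }
  return next;
}

// Repeats the loop for a number of training cycles.
function simulate(initial: Shares, rounds: number, bias = 1.2): Shares {
  let current = initial;
  for (let i = 0; i < rounds; i++) {
    current = nextRound(current, bias);
  }
  return current;
}

// Invented starting point: one established language, one mid-sized, one newcomer.
const start: Shares = { established: 0.6, midsize: 0.3, newcomer: 0.1 };
const afterFiveRounds = simulate(start, 5);
```

Under this rule the largest share grows every round and the smallest shrinks, whatever the exact exponent, as long as it is above 1. Real adoption has many other inputs, so treat this as a picture of the feedback direction, not a forecast.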

The winners of this loop are already clear: TypeScript, Python, Go, Rust. The losers are any new or niche language without a large existing corpus.

It doesn't matter how elegant the language design is, how much better the memory model, how much faster the compile times. If the AI assistant goes quiet when you switch to it, developers will switch back.

If your language doesn't have millions of code examples out there, Copilot won't be much help. And when Copilot doesn't help, developers pick something else.

This is a genuinely new pressure on programming language adoption that didn't exist before 2022. The metric that used to matter was "how good is the language?" The metric that increasingly matters is "how much has the model seen of it?"

What About Python?

Python is the obvious counterexample. It dominates AI/ML work -- roughly half of all new AI repositories on GitHub start in Python, and it's nowhere near declining for model training, data pipelines, and research.

But the key distinction is what kind of coding we're talking about.

As the ecosystem matures and more developers are building with AI rather than training AI, TypeScript's gravitational pull increases. The question "what language does an AI tool default to?" is really the question "what language does an AI tool see most of, in the context of application development?"

And that answer is shifting toward TypeScript faster than most people realize.

The Short Version

Strip everything else away. There are two defaults, and both are mechanically determined:

Abundance x Signal Density x Feedback Speed x Ecosystem Defaults = the model always wants to generate TypeScript. But the delivery mechanism overrides that preference. If there's a build pipeline, you get React + TypeScript. If there's no compilation step, you get HTML + CSS + vanilla JS. If you're in a code editor with a local toolchain, you get TypeScript in whatever framework you're already using.

It's not a preference. It's physics constrained by plumbing.

The next time an AI tool hands you a single HTML file when you expected React, or a TypeScript component when you expected something simpler -- you're watching a large language model navigate the intersection of statistical confidence and runtime constraints. It's doing exactly what it was trained to do, filtered through what it knows you can actually execute.

And the convenience loop ensures that tomorrow, both defaults will be even more entrenched than they are today.

References

- GitHub Octoverse 2025: TypeScript rises to #1 -- GitHub's annual developer report documenting TypeScript's 66% YoY growth, 2.6M monthly contributors, and the "convenience loop" pattern driving language adoption.

- TypeScript's Rise in the AI Era: Insights from Anders Hejlsberg -- GitHub Blog interview where Hejlsberg describes LLMs as "big regurgitators with some extrapolation" and explains why AI tools create a vicious cycle against new language adoption.

- Anders Hejlsberg: "AI is a big regurgitator of stuff someone has done" -- DevClass coverage of Hejlsberg's remarks on how training corpus size directly determines AI code generation quality.

- LLM Code Generation Error Analysis (ICSE 2025) -- Academic study finding that 94% of compilation errors in LLM-generated code are type-check failures.

- V0 by Vercel -- AI app builder with embedded React + TypeScript runtime and live preview.

- Bolt by StackBlitz -- Browser-based AI agent for full-stack web application development.

- Lovable -- Full-stack AI application platform generating real, editable source code from prompts.

- Google AI Studio -- Google's web IDE for prototyping with Gemini models.